Guest post: How to assess regional climate information for adaptation planning

Guest authors

09.21.21

21.09.2021 | 2:59pm

From heatwaves and wildfires, to floods and rainfall-induced landslides, the northern-hemisphere summer of 2021 has seen a brutal series of extreme weather events.

While climate change is often discussed at the global scale, its impacts are already observable at regional and local scales. With projections showing that regional climates can change faster than the global average, the lack of preparation by governments around the world brings the need for adaptation planning into sharp focus.

Fortunately, there is a deluge of regional climate information available. The first part of the new assessment report from the Intergovernmental Panel on Climate Change (IPCC), for example, has four chapters that focus on regional information.

However, this volume of information means we need better ways of assessing its quality for informing long-term planning under a changing climate.

For example, this summer’s weather has led to concerns from some scientists over the ability of climate models to simulate the severity of extremes seen. Other scientists have pushed back, pointing out that the events have been remarkable, but within the range of what climate models have predicted.

In a paper, published in the Bulletin of the American Meteorological Society earlier this year, we developed a framework for assessing the quality of regional climate information. In this article, we unpack the framework and how it can be applied.

Local and regional impacts

As its name suggests, global warming is a worldwide phenomenon. However, its impacts are generally felt at the local or regional level.

Therefore, in order to make long-term decisions around adaptation, decision-makers need reliable information about how the local and regional climate will change. However, there are several reasons why this is less than straightforward.

First, this information has a high degree of uncertainty. While the science behind global warming is robust, there are still open questions about how this will affect local weather patterns. For example, scientists know that a warming climate will bring more frequent and intense rainfall events, but just how the frequency and intensity will vary in specific locations is still being explored.

Second, the way that scientists validate climate models is particularly tricky for regional models. Scientists typically check their models by running “hindcast” simulations of past climate and comparing the results against observed data. However, the quality and availability of observed data can vary, depending on the part of the world.

Similarly, comparisons against palaeoclimate data from the Earth’s distant past are more difficult for specific local and regional climates. In any case, testing of model output against past data is only a partial assessment of the model (pdf).
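As a rough illustration of what a hindcast check involves, the sketch below compares simulated values against observations and reports the mean bias, the root-mean-square error and the share of time steps for which an observation exists. The function name `hindcast_skill` and the rainfall numbers are made up for this example; they are not taken from any real model or dataset.

```python
import math


def hindcast_skill(simulated, observed):
    """Compare a hindcast with observations (hypothetical helper).

    Time steps with a missing observation (None) are skipped, which is
    why the share of usable observations is reported alongside the scores.
    """
    pairs = [(s, o) for s, o in zip(simulated, observed) if o is not None]
    if not pairs:
        raise ValueError("no overlapping observations to compare against")
    errors = [s - o for s, o in pairs]
    bias = sum(errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    coverage = len(pairs) / len(observed)
    return {"bias": bias, "rmse": rmse, "coverage": coverage}


# Illustrative seasonal rainfall totals in mm (invented values).
simulated = [210.0, 195.0, 240.0, 220.0]
observed = [200.0, None, 250.0, 230.0]
skill = hindcast_skill(simulated, observed)
print(skill)
```

A low error on a sparse observational record says less than the same error on a dense one, which is why the coverage figure is kept next to the scores. Even a good score only shows agreement with the past, not reliability for the future.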

Third, the sources of information are rapidly multiplying: from publicly funded climate data to private climate services. But it is unclear how decision-makers in the public and private sectors can evaluate what information is good enough for their purposes.

These issues have prompted us to set up an interdisciplinary research group to address what it means for information to be suitable for adaptation decision-making. Drawing from the insights of environmental social science, philosophy of science and physical climate science, we aim to tackle the overarching question: how can we assess the quality of regional climate information about the future?

Assessing information quality

To answer this question, we developed a framework for assessing the quality of information about future regional climate.

This framework specifies the different aspects of information that need to be taken into consideration for assessing its quality. These include:

- The types of evidence that are taken into consideration when making an estimate about future changes in regional climate;

- The method that is used to process and analyse the evidence; and

- The type of statement or estimate that is made to communicate this information.

There are two main reasons why it is important to consider all these elements of the information.

First, the way that quality is defined in this framework is in terms of how good our explanations are of why we think the statement or estimate about future climate is likely to be true – known in the jargon as “epistemic reliability”. Explanations are rooted in scientific methodology, so we need to look at the evidence and the methods used by the scientists to produce this information.

Second, there is a complex relationship between the explanations we have for a particular statement and how precise this statement can be. For example, do my evidence and method warrant a probabilistic estimate, or can I only provide a range of expected outcomes? These are elements of the information production process that are crucial for quality.
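The difference between the two kinds of statement can be sketched with a small set of projected values. In the example below, the numbers and the function names `outcome_range` and `percentile_summary` are invented for illustration. The first function makes only the weaker claim (a range); the second computes percentiles, which can be read as probabilities only if the evidence and method justify that reading.

```python
import statistics


def outcome_range(values):
    """Weaker statement: the span of outcomes seen in the evidence."""
    return min(values), max(values)


def percentile_summary(values):
    """Stronger statement: 10th, 50th and 90th percentiles.

    statistics.quantiles with n=10 returns nine cut points, the first
    and last of which are the 10th and 90th percentiles.
    """
    deciles = statistics.quantiles(values, n=10, method="inclusive")
    return {
        "p10": deciles[0],
        "p50": statistics.median(values),
        "p90": deciles[8],
    }


# Invented projections of regional warming in degrees C.
projections = [1.2, 1.8, 2.1, 2.4, 3.0]
low, high = outcome_range(projections)
summary = percentile_summary(projections)
print(f"range: {low} to {high}")
print(summary)
```

The arithmetic is the same whatever the quality of the inputs. Whether the percentile summary is a defensible probabilistic estimate depends on how the projections were produced, not on the calculation.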

The framework itself, then, captures the dimensions of the scientific process that contribute to statements meeting this definition of quality. It does so by helping to answer the following question: are the evidence and methods adequate for the statements to be epistemically reliable? These dimensions are transparency, theory, number, independence, comprehensiveness and historical empirical adequacy.

- Transparency ensures that the data and the methodology are thoroughly explained, available and traceable.

- Theory assesses the strength of the theoretical underpinning of the statements or estimates about future regional climate and of the methodology used to derive them.

- Number assesses how many different types of evidence – such as model output, expert judgement and observations – are taken into consideration.

- Independence assesses the provenance of these types of evidence and the relationship between them – for example, are they all from the same research centre?

- Comprehensiveness assesses how much of the uncertainty related to each type of evidence is explored.

- Historical empirical adequacy evaluates the extent to which output from models has been compared to past data.

Together, number, independence and comprehensiveness contribute to a comprehensive uncertainty assessment.
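For readers who want to apply the six dimensions systematically, one option is to record an assessment as a simple checklist. The sketch below is only one possible way to do this: the class `QualityAssessment`, its methods and the yes/no scoring are assumptions made for this example. The framework itself is qualitative and does not prescribe a scoring scheme.

```python
from dataclasses import dataclass, field

# The six quality dimensions named in the framework.
DIMENSIONS = (
    "transparency",
    "theory",
    "number",
    "independence",
    "comprehensiveness",
    "historical_empirical_adequacy",
)


@dataclass
class QualityAssessment:
    """Hypothetical checklist for one climate information product."""

    product: str
    notes: dict = field(default_factory=dict)

    def record(self, dimension, satisfied, note=""):
        # The yes/no judgement is a simplification for this sketch.
        if dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {dimension}")
        self.notes[dimension] = (satisfied, note)

    def unassessed(self):
        """Dimensions that have not been looked at yet."""
        return [d for d in DIMENSIONS if d not in self.notes]

    def shortfalls(self):
        """Dimensions that were assessed and found wanting."""
        return [d for d in DIMENSIONS
                if d in self.notes and not self.notes[d][0]]


# Invented example product, not a real assessment.
assessment = QualityAssessment("hypothetical regional projection")
assessment.record("theory", True, "output linked to known weather systems")
assessment.record("transparency", False, "modelling choices not traceable")
print(assessment.shortfalls())
print(assessment.unassessed())
```

Keeping the unassessed dimensions separate from the shortfalls makes it harder to mistake "not yet checked" for "passed".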

Framework in practice

To demonstrate how the framework can assess quality and to test the framework itself, our group then applied the framework to the UK Climate Projections 2018 (UKCP18), a set of climate change projections produced specifically for the UK.

UKCP18, produced by the Met Office Hadley Centre, is a climate analysis tool designed to provide state-of-the-art information about future regional climate to support decision-making.

Three of its main products are “probabilistic” projections – meaning each projection has a likelihood attached to it – for different aspects of the UK’s climate, a set of global projections and a set of regional projections. These are explained in more detail in Carbon Brief’s in-depth Q&A.

The application of our framework reveals strengths and weaknesses in these three products, and can provide a pathway for improvement for current and future products.

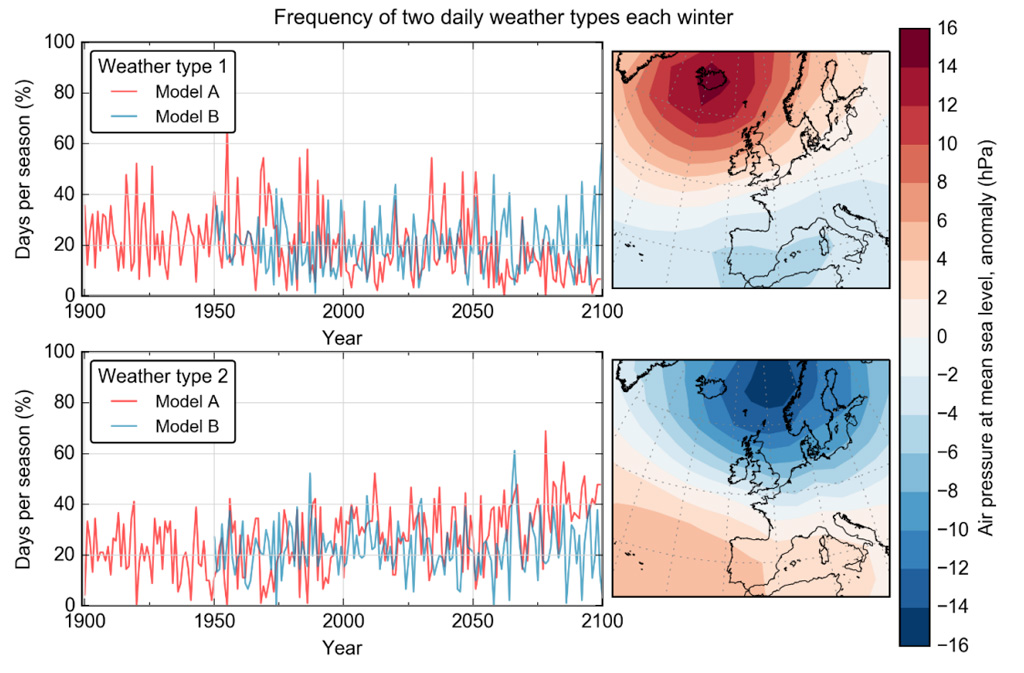

For example, the global projections have good theoretical support: model output is analysed in terms of its relation to synoptic systems and the type of weather these systems are associated with. You can see this in the figure below, taken from the UKCP18 science overview report (pdf), which shows how different models relate a particular weather type over the UK – negative (top) and positive (bottom) phases of the North Atlantic Oscillation (NAO) – to synoptic systems over the Atlantic Ocean.

In contrast, the probabilistic projections have the weakest theoretical support of the UKCP18 products, as it is unclear exactly what kind of information the probabilities represent. The approach used to generate the probability distributions is a Bayesian framework, which represents the scientists’ beliefs about the system. Yet the science report explicitly states that the probabilities do not represent the beliefs of the scientists involved in UKCP18.

Elsewhere, the regional projections have good historical empirical adequacy: as explained in the land projection science report, model output is extensively tested against historical observations.

However, there is little transparency across all types of projections. For example, the science report and the “caveats and limitations” document explain that model output is conditional on modelling choices, but they do not explain what this entails for epistemic reliability and the extent of epistemic uncertainty.

One consequence of this analysis for decision-makers is that, since the information does not satisfy all quality dimensions, there are doubts about the fitness of the products for the purpose of supporting adaptation decisions.

For example, how can probabilistic information be integrated into decision-making if it is unclear what these probabilities represent? Do these probabilities capture enough of the relevant uncertainties? A clearer formulation of what this information represents and what its limitations are may help answer these questions. The quality dimensions of the assessment framework can therefore also be a guide for scientists who want to produce decision-relevant information.

This research can be extended by using the framework to evaluate the quality of other national products that provide information about future regional climate for supporting adaptation decisions. It can also be used to develop new information that is of higher quality.

Furthermore, there is the potential for end users to explore the usability of this framework. For example, while it is intended to provide guidelines for non-experts about what elements of the scientific process can contribute to high-quality regional climate information, the extent to which this framework can be used by users of information beyond academia has not yet been tested. We hope to address these remaining questions in future research.

Baldissera Pacchetti, M. et al. (2021) Assessing the quality of regional climate information, Bulletin of the American Meteorological Society, doi:10.1175/BAMS-D-20-0008.1.
